Introduction

Plan International Canada is a humanitarian and development organisation advancing children’s rights and equality for girls in over 80 countries. Through its global child sponsorship program, Plan connects sponsored children and supporters through personal letters that form the foundation of long‑term, trust‑based relationships. Last year, the organisation’s work ensured that girls were healthy, safe and educated, reaching more than 4 million girls and nearly 8 million children overall. It currently supports over 100,000 sponsored children worldwide.

To protect children and ensure safe, appropriate communication, every letter exchanged between a child and a sponsor must undergo safeguarding and content review. To strengthen consistency, reduce operational strain, and maintain the child’s authentic voice at scale, Plan International Canada implemented an AI‑assisted safeguarding and content‑vetting solution to support and not replace human review.

The solution is now embedded in Child Communications Team workflows and supports consistent safeguarding checks, clearer prioritisation of risk, and continuous monitoring of AI recommendations versus human outcomes. Ongoing oversight, governance, and tuning ensure the system remains safe, transparent, and aligned with humanitarian and child‑protection principles.

Background

Plan International Canada’s Child Communications team reviews more than 3,000 sponsor–child and child–sponsor letters each month. Each letter must be checked to ensure:

- Safeguarding and child‑protection standards are met

- No inappropriate personal, sensitive, or harmful content is included

- Sponsorship identifiers are correct

- Cultural nuance and authenticity of the child’s voice are preserved

This review process is essential but highly manual and time‑intensive. Prior to AI support, reviewers spent a significant portion of their time reading and scanning letters end‑to‑end to identify potential risks, even when no issues were present. As communication volumes grew and expectations for timely donor engagement increased, this approach created operational bottlenecks and variability in how safeguarding rules were applied across reviewers.

The core challenge was to improve consistency and efficiency in the reading and initial processing of letters without compromising child safety, ethics, or authenticity.

AI Development

Why AI?

Plan International Canada explored AI as a way to support high‑volume, rules‑driven safeguarding tasks that require consistency and careful attention to edge cases. AI was selected to assist with:

- Applying safeguarding rules consistently across all letters

- Highlighting potential risks for human review

- Reducing repetitive reading and scanning effort during peak volumes

AI was intentionally designed as decision support, not automation. Human reviewers remain responsible for all final decisions.

Co‑Design and Stakeholder Engagement

The solution was co‑designed with cross‑functional teams to ensure safeguarding, privacy, and operational realities were embedded from the outset:

- Child Communications Team (primary users)

- Safeguarding specialists

- IT, DevOps, and Security

- Data Governance and AI oversight

- Legal and privacy advisors

- Global Head Office and cross‑national-office child‑data advisors

Direct consultation with children was not appropriate given safeguarding considerations; instead, child‑protection experts and established safeguarding policies guided all design decisions. Stakeholder consultations took place during the planning and solutioning phases, as early in the project as possible, to capture the appropriate requirements.

Design and Development Process

The team followed a structured, iterative approach:

- Exploration & Feasibility – Assessed whether AI could safely support safeguarding checks and where human judgment must remain mandatory.

- Data Preparation – Used historical approved and rejected letters to understand decision patterns and refine rejection criteria.

- AI Configuration – Configured the AI to analyse letter text, flag safeguarding risks, identify inappropriate themes, and surface clear rejection reasons while avoiding hallucinated or invented content.

- Iterative Testing – Conducted side‑by‑side comparisons with manual decisions, tested edge cases, and validated outputs with safeguarding specialists. In the initial rounds of development testing, a large number of sample letters from previous months were run through the process for preliminary tuning.

- Observability & Auditability – Implemented logging of AI outputs, human overrides, processing times, and rejection categories.

- Human‑in‑the‑Loop Oversight – All AI recommendations require human approval; the AI never edits or rewrites a child’s words.

- Ownership & Governance – Child Communications Team owns day‑to‑day use, with ongoing governance and monitoring by Data Governance and AI oversight teams.
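As one illustration of the observability step above, the sketch below builds a metadata-only audit record for a single letter. The field names (`letter_id`, `rejection_categories`, `processing_ms`) and the JSON output are assumptions made for illustration; the case study does not describe the actual log schema. The letter text is deliberately kept out of the record.

```python
import json
from datetime import datetime, timezone


def audit_record(letter_id, ai_approved, rejection_categories,
                 human_approved, processing_ms):
    """Build a metadata-only log entry (hypothetical schema, no letter text)."""
    return {
        "letter_id": letter_id,
        "logged_at": datetime.now(timezone.utc).isoformat(),
        "ai_approved": ai_approved,
        "rejection_categories": sorted(rejection_categories),
        "human_approved": human_approved,
        # An override is any case where the reviewer disagreed with the AI.
        "human_override": human_approved != ai_approved,
        "processing_ms": processing_ms,
    }


# Example: the AI recommended rejection, the reviewer approved the letter.
record = audit_record("L-0001", False, ["photo_reference"], True, 840)
log_line = json.dumps(record)
```

Writing one JSON object per letter keeps the log easy to aggregate later by rejection category or override status.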

Humanitarian Principles and Ethics

Throughout the project, humanitarian principles and child-safeguarding ethics guided every design decision. The team adopted a risk-first approach, prioritising child safety over automation speed by preferring false rejects over false accepts and ensuring all AI outputs remained subject to mandatory human review. Safeguarding specialists and cross-office privacy advisors were consulted to shape rejection criteria, define sensitive or harmful content, and ensure cultural nuance was respected. Specific design pivots, such as distinguishing factual from persuasive religious references, excluding OCR-generated “image” artifacts to avoid inappropriate flags, and relying on ID-based rather than name-based validation, were made to prevent harm and uphold fairness. The solution was further shaped by a comprehensive Data Protection Impact Assessment (DPIA), strict data-governance controls, metadata-only retention, and clear auditability measures, ensuring compliance with privacy requirements and responsible handling of child data. Together, these efforts ensured the AI system enhanced safeguarding while reinforcing Plan International Canada’s ethical obligations to protect children and preserve the authenticity of their voices.

Exciting and Challenging Aspects of the Process

One of the most exciting aspects of the project was watching the interdisciplinary collaboration deepen as the team confronted real safeguarding, linguistic, and operational complexities that do not surface in theoretical design. As testing progressed, the team had to navigate challenging issues such as OCR inaccuracies, ambiguous historical rejections, and the difficulty of distinguishing sensitive content, for example factual versus persuasive religious references. Several design pivots, such as shifting from name-matching to ID-driven validation, refining image detection to avoid OCR artifacts, and introducing synthetic letters when real safeguarding samples were limited, required thoughtful debate and quick adaptation. These moments highlighted both the promise and the limitations of AI in humanitarian workflows, reinforcing the importance of governance clarity, precise examples, human-in-the-loop review, and a risk-first approach.

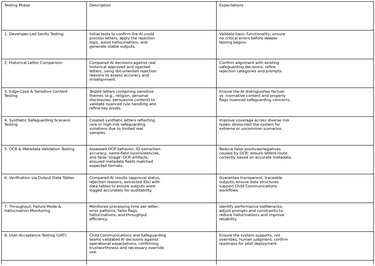

Table: Testing the AI Application throughout the Development Process (columns: Testing Phase, Description, Expectations)

Ownership

Ownership of the AI application was assigned to the Child Communications team, given that they manage the day-to-day vetting, approval, and safeguarding workflow for all child letters. Discussions during development emphasised that operational ownership needed to remain with the team closest to children’s communications, while long-term oversight, including data governance, privacy controls, and safeguarding integrity, would be jointly supported by Data Governance, Legal/Privacy, and Safeguarding specialists. This shared-responsibility model emerged through cross-team consultations, which highlighted the importance of maintaining human authority over final decisions, ensuring compliance with child-data policies, and providing sustained governance as the system evolves.

Key Design Debates and Pivots

Several design decisions evolved through testing and discussion:

- Religion: Shifted from rejecting all mentions to distinguishing factual references from persuasive or normative content.

- Identifiers: Prioritised Child ID and Sponsor ID over names due to optical character recognition (OCR) variability.

- Images: Excluded OCR artifacts (e.g., the word “image”) while continuing to flag references to photos or attachments.

- Language Scope: Limited AI evaluation to English content; letters without English translations are flagged for manual handling.

- Testing Strategy: Supplemented limited real safeguarding samples with synthetic test letters.

These pivots reflect a deliberate, risk‑first approach favouring conservative safeguards over aggressive automation.
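Three of the pivots above (identifiers, images, and language scope) can be pictured as simple pre-checks. The following is a minimal sketch, not the deployed logic: the regular expressions, flag names, and function signature are all hypothetical, and the religion and testing-strategy pivots are not modelled here.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical patterns, for illustration only.
OCR_ARTIFACT_LINE = re.compile(r"^\s*image\s*$", re.IGNORECASE)
PHOTO_REFERENCE = re.compile(r"\b(?:photo\w*|picture\w*|attach\w*)\b", re.IGNORECASE)


@dataclass
class PreCheck:
    flags: List[str] = field(default_factory=list)
    manual_handling: bool = False


def pre_check(english_text: Optional[str], child_id: str, sponsor_id: str,
              expected_child_id: str, expected_sponsor_id: str) -> PreCheck:
    result = PreCheck()

    # Language scope: no English text means the letter goes to manual handling.
    if not english_text or not english_text.strip():
        result.manual_handling = True
        result.flags.append("no_english_translation")
        return result

    # Identifiers: compare IDs, not names, because OCR output for names varies.
    if (child_id, sponsor_id) != (expected_child_id, expected_sponsor_id):
        result.flags.append("identifier_mismatch")

    # Images: drop lines that consist only of the OCR artifact "image" ...
    kept = [ln for ln in english_text.splitlines()
            if not OCR_ARTIFACT_LINE.match(ln)]
    # ... but still flag genuine references to photos or attachments.
    if PHOTO_REFERENCE.search("\n".join(kept)):
        result.flags.append("photo_or_attachment_reference")

    return result
```

In this sketch a stray line reading only "image" raises no flag, while a sentence such as "I attached a photo of my family" does.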

How the AI Works (Lay Description)

The system uses AI to read the text of letters and compare them against predefined safeguarding and content rules. It produces a recommendation (approved or not approved), identifies specific reasons for concern, and assigns confidence indicators.

Human reviewers then review the letter alongside the AI’s findings and make the final decision.
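For readers who prefer a concrete picture, the recommendation and the human decision could be modelled roughly as below. This is a sketch under assumptions: the class and field names are hypothetical, and representing the confidence indicator as a number between 0 and 1 is a guess, since the case study does not say how confidence is expressed.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class AIRecommendation:
    approved: bool              # approved or not approved
    reasons: Tuple[str, ...]    # specific reasons for concern, if any
    confidence: float           # assumed 0.0 to 1.0 scale (hypothetical)


def final_decision(recommendation: AIRecommendation,
                   reviewer_approved: Optional[bool]) -> bool:
    """Return the final outcome, which always comes from the human reviewer."""
    if reviewer_approved is None:
        # The AI output alone is never enough to approve or reject a letter.
        raise ValueError("A human reviewer must make the final decision.")
    return reviewer_approved


rec = AIRecommendation(approved=False,
                       reasons=("photo_or_attachment_reference",),
                       confidence=0.72)
outcome = final_decision(rec, reviewer_approved=True)  # reviewer overrules the AI
```

The recommendation is passed in only so the reviewer can see it alongside the letter; it has no effect on the returned value.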

Operationalising AI

Use in Practice

The AI‑assisted workflow is used daily by the Toronto‑based Child Communications team to support:

- Safeguarding risk detection

- Identification of sensitive or inappropriate content

- Alerts for translation inconsistencies

- Summaries of complex letters

Users

Primary users include Child Communications processing staff and safeguarding specialists, supported by IT and Data Governance teams.

Early Outcomes and Impact

Based on early operational use and observation, the AI‑assisted approach has materially reduced the time reviewers spend reading and initially processing letters end‑to‑end. While precise metrics continue to be monitored and refined, initial estimates indicate:

- Approximately 25-40% reduction in manual reading and scanning effort per letter during the initial review stage

- More consistent identification of safeguarding‑related risks across reviewers

- Increased ability for staff to focus time on complex, sensitive, or borderline cases

These impacts are continuously monitored through comparison of AI recommendations and final human decisions, with particular attention to deviations caused by edge cases.

User Support

Child Communications, IT, and Data Governance jointly support the solution. A defined hyper‑care and steady‑state support model includes close monitoring of discrepancies between AI recommendations and human decisions, prompt tuning, and validation of reporting outputs.

Challenges Encountered

Key challenges included:

- Initial trust in AI recommendations

- Early hallucinations, addressed through tighter constraints

- OCR inaccuracies affecting extraction

- Cultural nuance limitations requiring continued human judgment

Learnings

What Worked Well

- Governance‑first design

- Continuous collaboration between technical and safeguarding experts

- Early identification and testing of edge cases

- Treating AI as support, not replacement

What We Would Do Differently

- Involve cultural and linguistic advisors earlier

- Invest more upfront in AI literacy training

- Expand access to real safeguarding examples sooner

Issues Still Being Addressed

- Further reducing hallucinations

- Improving OCR accuracy

- Ongoing trust‑building and change management

Changes Already Made

- Tighter AI constraints and prompts

- Enhanced observability and reporting

- Clearer rejection logic and prioritisation

Plans for the Future

Monitoring and Impact

Ongoing monitoring includes:

- Comparison of AI recommendations with final human decisions

- Tracking false positives and false negatives

- Monitoring processing time and volumes

- Regular review of governance, access controls, and safeguards
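The first two monitoring items can be sketched as a simple tally over pairs of AI and human decisions. The code below is illustrative only: the function name and output keys are hypothetical, and it assumes that a "false reject" (the AI recommended rejection, the reviewer approved) corresponds to a false positive and a "false accept" to a false negative.

```python
from collections import Counter


def compare_decisions(pairs):
    """Tally agreement between AI and human decisions.

    pairs: iterable of (ai_approved, human_approved) booleans.
    """
    counts = Counter()
    for ai_approved, human_approved in pairs:
        if ai_approved == human_approved:
            counts["agree"] += 1
        elif human_approved:
            counts["false_reject"] += 1   # AI said reject, human approved
        else:
            counts["false_accept"] += 1   # AI said approve, human rejected
    total = sum(counts.values())
    disagreements = counts["false_reject"] + counts["false_accept"]
    return {
        "total": total,
        "agree": counts["agree"],
        "false_reject": counts["false_reject"],
        "false_accept": counts["false_accept"],
        "disagreement_rate": disagreements / total if total else 0.0,
    }


summary = compare_decisions([(True, True), (False, False),
                             (False, True), (True, False)])
```

Under a risk-first approach, the `false_accept` count is the figure to watch most closely, since those are the cases where the AI would have let through a letter that a reviewer rejected.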

Scaling and Enhancements

Near‑term plans include full Canadian deployment and continued tuning. Medium‑ and long‑term plans include:

- Scaling to additional National Offices

- Adding image analysis capabilities

- Expanding multilingual evaluation

- Periodic audits and governance reviews

Alignment with the Wider Sector

This work reflects challenges common across the humanitarian sector, particularly in child protection and safeguarding‑critical processes where high volumes, sensitive content, and ethical risk intersect. The approach demonstrates how AI can be used responsibly to support and not replace human judgment in safeguarding contexts, offering transferable lessons for organisations facing similar communication and review challenges.

Financial Sustainability

The solution is designed to be financially sustainable by embedding it into existing operations, minimising per‑letter processing costs, and maintaining clear ownership within the Child Communications Team. Ongoing cost monitoring and optimisation are built into the governance and operational model.

Where to Learn More

For questions or collaboration inquiries, please contact:

Jennifer Pattison, Director of Data and AI

Plan International Canada